Ph.D. Candidate: Demet Demir

Program: Information Systems

Date: 10.07.2024 / 15:30

Place: A-212

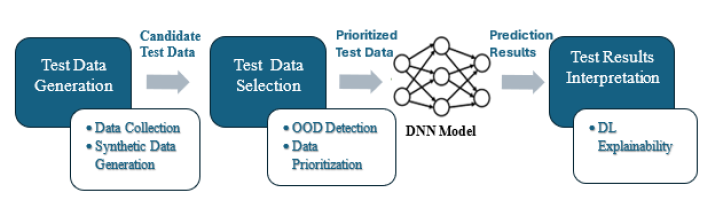

Abstract: In this thesis, we introduce a testing framework designed to identify fault-revealing data in Deep Neural Network (DNN) models and determine the causes of these failures.

Given the data-driven nature of DNNs, the effectiveness of testing depends on the adequacy of labeled test data. We performed test data selection with the goal of identifying and prioritizing the test inputs most likely to cause failures in the DNN model under test. To achieve this, we prioritized the test data based on the uncertainty of the DNN model. We first employed state-of-the-art uncertainty estimation methods and metrics, and then proposed new ones. Finally, we developed a novel approach using a meta-model that integrates multiple uncertainty metrics, overcoming the limitations of the individual metrics and enhancing effectiveness in various scenarios.
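As an illustration of uncertainty-based prioritization, the sketch below ranks test inputs by the entropy of the model's softmax output, so that the inputs the model is least sure about are tested first. Entropy is only one common uncertainty metric and is used here as an assumption; the abstract does not name the specific metrics or the meta-model design used in the thesis, and the function names and probability values are hypothetical.

```python
import numpy as np

def softmax_entropy(probs):
    """Shannon entropy of each row of class probabilities (higher = more uncertain)."""
    p = np.clip(probs, 1e-12, 1.0)  # avoid log(0)
    return -np.sum(p * np.log(p), axis=1)

def prioritize(probs):
    """Return test-input indices ordered from most to least uncertain."""
    scores = softmax_entropy(probs)
    return np.argsort(-scores, kind="stable")

# Hypothetical softmax outputs for three test inputs over three classes.
probs = np.array([
    [0.98, 0.01, 0.01],  # confident prediction
    [0.34, 0.33, 0.33],  # nearly uniform, highly uncertain
    [0.70, 0.20, 0.10],  # moderately uncertain
])

order = prioritize(probs)
print(order.tolist())
```

A meta-model in the spirit of the abstract could take several such scores per input as features and learn to predict whether the input is misclassified; that step is not shown here.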

The test data distribution significantly impacts DNN performance and is critical in assessing test results. Therefore, we generated test datasets with a distribution-aware perspective. We propose to first focus on in-distribution data, for which the DNN model is expected to make accurate predictions, and then include out-of-distribution (OOD) data. Furthermore, we investigated post-hoc explainability methods to identify the causes of incorrect predictions. Visualization techniques in deep learning (DL) explainability provide insights into why DNNs make incorrect decisions. However, these techniques require detailed manual assessment.

We evaluated the proposed methodologies using image classification DNN models and datasets. The results show that uncertainty-based test selection effectively identifies fault-revealing inputs. Specifically, test data prioritization using the meta-model approach outperforms existing state-of-the-art methods. We conclude that testing with prioritized data significantly increases the detection rate of DNN model failures.
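One simple way to quantify the benefit of prioritization is to measure the fraction of all failing inputs that fall within a fixed test budget. The sketch below assumes this measure for illustration only; the abstract does not state which evaluation metric the thesis uses, and the function name, orderings, and failure labels are toy values, not results from the thesis.

```python
import numpy as np

def faults_found_in_budget(order, is_failure, budget):
    """Fraction of all failures that appear among the first `budget` inputs of `order`."""
    is_failure = np.asarray(is_failure, dtype=bool)
    total = int(is_failure.sum())
    if total == 0:
        return 0.0
    selected = np.asarray(order)[:budget]
    return float(is_failure[selected].sum()) / total

# Toy ground truth: which of six test inputs the model misclassifies.
is_failure = np.array([False, True, True, False, False, True])

original_order = [0, 1, 2, 3, 4, 5]     # dataset order, no prioritization
prioritized_order = [1, 2, 5, 0, 3, 4]  # hypothetical uncertainty-based ranking

rate_original = faults_found_in_budget(original_order, is_failure, budget=3)
rate_prioritized = faults_found_in_budget(prioritized_order, is_failure, budget=3)
print(rate_original, rate_prioritized)
```

With the same budget of three inputs, a ranking that places failing inputs first exposes a larger share of the failures than the unprioritized order.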